Elevator pitch

Time plays an important role in both the design and interpretation of evaluation studies of training programs. While the start and duration of a training program are closely linked to the evolution of job opportunities, the impact of training programs in the short and longer term changes over time. Neglecting these “dynamics” could lead to an unduly negative assessment of the effects of certain training schemes. Therefore, a better understanding of the dynamic relationship between different types of training and their respective labor market outcomes is essential for a better design and interpretation of evaluation studies.

Key findings

Pros

Dynamic evaluation approaches that consider how training programs and their impacts change over time improve the understanding of how these programs work.

Job-search training is a low-cost intervention relative to other active labor market policies.

Job-search training reduces the duration of unemployment for the individual.

Occupational skills training has positive long-term effects on employment stability and earnings, which can persist over many years.

Cons

The dynamics and impacts of training participation may be misrepresented in static evaluation approaches, which could underestimate training impacts.

Job-search training has no strong long-term effects on employment and earnings.

Occupational skills training ranks among the most expensive active labor market programs.

Occupational skills training initially prolongs unemployment.

Author's main message

Of the many “active” labor market policies designed to help unemployed people find work, occupational skills training ranks amongst the most expensive. However, although skills training is costly to implement and can prolong unemployment, the long-term improvements in employment stability and earnings can persist over many years. In contrast, job-search-oriented training is a relatively low-cost intervention and helps to identify suitable employment opportunities for individuals more quickly. Policymakers should be aware that while job-search training is the best option to activate job-seekers in the short term, investment in occupational skills training is more effective for addressing individual welfare issues and structural skills mismatch in the labor market in the long term.

Motivation

Training programs are an important instrument in the tool box of “active labor market policies”; that is, policies designed to help unemployed people find work. Periods of unemployment, or weak attachment to the labor force, may be accompanied by a deterioration in job-search skills, as well as in occupational knowledge and competencies. Training programs can help prevent, or reverse, a situation in which self-sustaining employment appears to be no longer achievable. Short-term, job-search-oriented programs focus on speedy job entry, whereas longer-term training supports the comprehensive development of occupational skills.

Given the multiple goals of labor market policy, and the various methods and approaches for achieving them, the relative success of these different approaches—skills development or “work-first” strategies—remains a controversial issue, and assessing their effectiveness can be a complex process.

A better understanding of the dynamic relationship between different types of training and their respective labor market outcomes is key for the design and interpretation of evaluation studies. This perspective on training is essential for formulating policy recommendations that will allow decision makers to optimally balance their choice of different types of training programs.

Discussion of pros and cons

Policy measures for the unemployed are designed to provide workers with an insurance against the risk of loss of earnings and consumption potential during periods of unemployment, and to provide job-seekers with the right incentives to take up employment again. Most existing unemployment measures include a mix of passive policies, e.g. benefit schemes that compensate for the earnings loss, and active policies that are designed to help the unemployed back into work. There are two main categories of active employment policy: (1) activation strategies that focus on improving the job-search activities of the unemployed (either with direct monitoring or training on job-search skills); and (2) human-capital development strategies that provide training in occupational skills in order to improve the productivity and employability of the unemployed.

A major advantage of job-search training is its low cost. However, a downside is that the limited set of skills provided may not be sufficient to raise employment stability and earnings in the long term. More comprehensive training programs appear to be better suited to improve long-term outcomes and address the structural mismatch between worker skills and employer demand. However, such programs rank amongst the most expensive active policies, as they are costly to run and because the period of unemployment (and in many cases, unemployment benefit payment) is prolonged.

From a theoretical perspective, the application of training policies should depend on the specific skills of a job-seeker and how their skills evolve or develop during the course of unemployment [2]. Participating in job-search training becomes more worthwhile following a period of unsuccessful searching, as it can help to prevent, or reverse, a decline in the effectiveness of the search. Participating in intensive skills training is most beneficial during the early stage of unemployment if it is clear that the occupational skills of a worker have become obsolete when they were made unemployed, e.g. because their skills are not relevant for other jobs. If, however, occupational skills depreciate only slowly during the course of unemployment, intensive skills training would be better targeted at the long-term unemployed.

Empirical evaluations provide evidence on the effectiveness of training programs that can guide decision makers to choose optimally between different policy options. State-of-the-art evaluations of training programs are based on control-group designs, in which the labor market outcomes of job-seekers assigned to a training program are compared to the outcomes of job-seekers who are not assigned to the program. In social experiments, job-seekers are randomly assigned to “treatment” and “control” groups. In contrast, studies based on observational data rely only on statistical methods to generate the treatment and control groups.

Causal relationships and time play an important role in the design and interpretation of evaluation studies. While the start and duration of a training program are both linked to the emergence of job opportunities before and during the period of training, the effects of the training can change over time. Participating in training programs can directly affect the current job-search and labor market outcomes of the participants. In contrast, the longer-term effects of the newly acquired skills resulting from training may materialize only gradually over time.

Empirical evidence on the dynamics of training impacts

Empirical evidence on the effectiveness of training programs has increased significantly since the mid-1990s, mainly in response to a growing political demand for scientific evaluations of labor market policies, particularly in Europe. One notable study summarizes the evaluation results from more than 200 econometric evaluations of active labor market programs worldwide, of which around 50% include results on skills-intensive training and 15% on job-search training [3]. The study distinguishes between short-term impacts (less than a year) and long-term impacts (more than two years after the assignment of the program to an individual). On average, the impacts of these programs are close to zero in the short term and become more positive in the long term. The precise time profiles, however, vary across program types.

In the case of training programs, there is initially a phase of negative impact (i.e. the so-called “lock-in” effect when, during program participation, participants do not move into regular employment), which is directly related to the duration of the program. Longer programs obviously exhibit longer and deeper lock-in effects than shorter ones. The lock-in effect reflects the investment component of programs aiming at human capital, or skills, development. In the short term, participants in comprehensive training concentrate on improving their occupational skills and, as a result, miss out on job opportunities. However, to the extent that comprehensive training is effective, the labor market outcomes of participants improve once the program is completed. Positive program impacts in the medium and long term reflect the return to the training investment, as well as the return to work experience. In contrast, job-search training programs are usually of short duration and aim at quick re-employment rather than human-capital development. If anything, the lock-in effect is not very pronounced in job-search training. If job-search training is effective, positive impacts appear instantaneously. Since the investment in occupational skills is negligible with job-search schemes, there is less scope for positive impacts over the medium and long term.

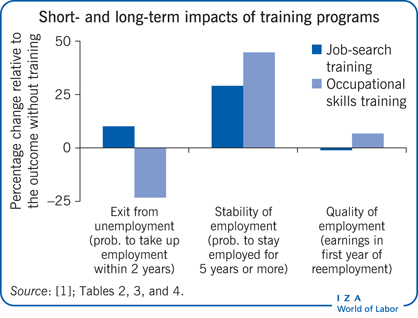

A few studies directly compare different types of training programs and also evaluate their impacts over longer-term horizons of up to ten years [1]. These studies suggest that job-search training raises employment prospects in the short term, whereas more intensive skills training initially reduces employment rates and leads to earnings losses. Over the longer term, comprehensive skills training tends to be more effective than job-search training. The positive effects of comprehensive training persist over periods as long as eight or nine years, whereas the effects of job-search training can disappear after a couple of years.

The vast majority of evaluation studies focus on cross-sectional outcomes, i.e. outcomes that can be measured at one point in time, such as employment rates and average earnings. A few studies consider dynamic outcomes, such as the remaining time in unemployment or the duration of subsequent employment. A focus on dynamic outcomes is necessary in order to better understand how training programs work [4]. If participation in a particular program increases the employment rate at a given point in time, one does not know whether the program generates its beneficial effects through shortening unemployment spells or through increasing employment stability. This distinction matters, however, for understanding the extent to which training programs activate job-seekers or improve their productivity when re-employed.

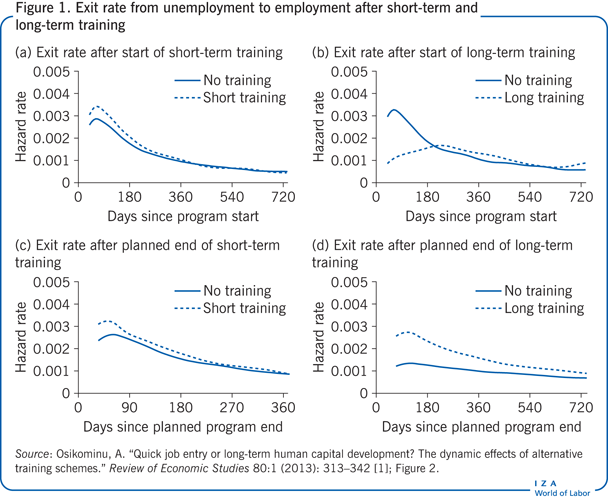

A recent study investigates the dynamic effects of short-term, job-search-oriented training and long-term, human-capital-intensive training in more detail [1]. It considers impacts on time until job entry, stability of employment, and earnings. The analysis uses unique data for Germany, where both types of programs are operated simultaneously (Figure 1). The results of this study are broadly in line with the limited available evidence for other European countries and the US.

Panels (a) and (b) in Figure 1 show how the exit rates evolve from the start of the program onwards, while panels (c) and (d) show how they evolve from the planned program end onwards. Panel (a) suggests that job-seekers exit unemployment at a faster rate with short-term training than without training, from the start of the program onwards. Participation in short-term training, which has a median planned duration of four weeks, has no noticeable lock-in effect. Rather, it raises the job-finding rates of participants instantly. The effect peaks at about 65 days following the start of the program, when the exit rate “with” short-term training exceeds that “without” by 18%. However, this advantage vanishes relatively quickly. Panel (c) shows that the difference between the exit rates with, and without, short-term training is already very small three months after the planned program end. In total, short-term training reduces the remaining time in unemployment by three weeks.

The pattern is different for long-term, human-capital-intensive training, which has a median planned duration of 201 days. Panel (b) of Figure 1 suggests that, during participation, people exit unemployment at a much lower rate than they would without participation. Only somewhat more than 200 days after program start, by which time the majority of participants have completed their training, does the exit rate increase to a level slightly above that without participation. However, concentrating on the period following the scheduled program end, in panel (d) of Figure 1, long-term training has strong and persistent positive effects on the exit rate to employment. During the first three months after the planned program end, the exit rate out of unemployment “with” participation is about twice as high as “without” participation. This effect slowly decreases over time. One year after the planned program end, the exit rate with participation is still around 40% higher than without training. In total, long-term training programs initially prolong the remaining time in unemployment by about three months.
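To make the notion of an exit rate concrete, the following minimal sketch computes discrete-time exit rates from a list of unemployment spells and compares them between two groups. It is an illustration only: the function names, the 30-day intervals, and the spell lengths are hypothetical, and the study behind Figure 1 relies on more elaborate dynamic methods than this simple count.

```python
def exit_rates(durations, exited, interval_days=30, n_intervals=3):
    """Discrete-time exit rates from unemployment.

    For each interval, the rate is the number of exits to employment in
    that interval divided by the number of spells still ongoing at its start.
    Spells with exited=False (censored) count as at risk but never as exits.
    """
    rates = []
    for k in range(n_intervals):
        start = k * interval_days
        end = start + interval_days
        at_risk = sum(1 for d in durations if d >= start)
        exits = sum(
            1 for d, e in zip(durations, exited) if e and start <= d < end
        )
        rates.append(exits / at_risk if at_risk else None)
    return rates


def exit_rate_ratio(rates_with, rates_without):
    """Interval-by-interval ratio of exit rates with vs. without training."""
    return [
        w / wo if w is not None and wo else None
        for w, wo in zip(rates_with, rates_without)
    ]


# Hypothetical spell lengths in days; True means the spell ended in a job.
with_training = exit_rates([10, 20, 40, 50, 70, 100], [True] * 6)
without_training = exit_rates([20, 50, 70, 80, 100, 120], [True] * 6)
ratios = exit_rate_ratio(with_training, without_training)
```

A ratio above one in a given interval means that, among those still unemployed at the start of that interval, participants leave unemployment faster than comparable non-participants.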

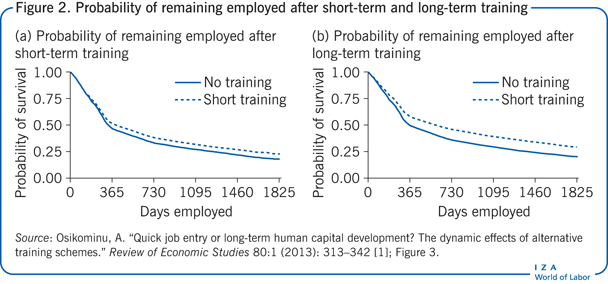

Figure 2 displays the impacts of training on the probability of remaining employed [1]. It shows that for both training programs the probability of remaining employed is higher “with” training than “without” at longer-elapsed durations. The vertical difference between the survival probabilities, with and without training, increases up to approximately 1.5 years after the beginning of the employment period and remains constant thereafter. After five years, 22% of the people who participated in short-term training have been continuously employed, compared to 17% in the situation without training. After five years, 29% of the people who participated in long-term training have not left their first employment, compared to 20% in the situation without training. In the first year of employment, long-term training increases earnings by 7% on average, whereas short-term training has zero effect on earnings [1].
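The five-year figures above can be read either as percentage-point gaps or as relative gains. The short sketch below, which uses only the shares quoted in the text and a hypothetical helper name, shows both readings side by side.

```python
# Shares (in %) still continuously employed after five years, from the text.
still_employed = {
    "short-term training": {"with": 22, "without": 17},
    "long-term training": {"with": 29, "without": 20},
}


def survival_gain(shares):
    """Return (percentage-point gap, relative gain in %) for one program."""
    gap_pp = shares["with"] - shares["without"]
    relative_pct = round(gap_pp / shares["without"] * 100, 1)
    return gap_pp, relative_pct


gains = {name: survival_gain(s) for name, s in still_employed.items()}
```

On these numbers the gap is five percentage points for short-term training and nine for long-term training, so the long-term program shows the larger gain on both readings.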

The study further looks into the different effects of training along a number of personal characteristics [1]. Participants without a formal education degree and people previously working in low- and medium-skilled manual occupations realize particularly high earnings gains after long-term training, in the order of 10–19%. Participants previously working in medium-skilled analytic and interactive jobs reap substantial gains in terms of employment stability.

In sum, long-term training programs foster the occupational advancement of different groups of job-seekers, including those with weak labor market prospects. The unemployment-prolonging effect of long-term training is smaller for people with a lower chance of exiting unemployment on their own, such as the long-term unemployed and low-skilled. Job-search training reduces the time without a job more if it is started earlier during unemployment.

Methodological challenges related to the dynamics of training

Experiments are often considered to be the gold standard for program evaluation. Classical laboratory experiments, as are common in the natural sciences, are also appealing to social scientists because the researcher has perfect control over the experimental setup, and the effectiveness of the treatment can be inferred by a simple comparison of the mean outcomes of the treated and control units. Evaluations of labor market policies are often designed and interpreted in the same way as classical laboratory experiments, even though the empirical reality may not support this approach.

In social experiments, researchers often cannot ensure compliance with the experimental protocol. It is usually not possible (nor desirable) to compel people in the treatment group to participate in the intended program from start to finish, or to deny people in the control group access to alternative programs similar to the one studied. Rather, treatment-group dropout and control-group substitution are common phenomena. Moreover, standard delays between the time of program assignment, program start, and program end mean that experimental subjects may revise their decisions on program participation and take-up of employment in-between. Thus, actual participation in training programs is the result of both the initial randomization and the dynamic decisions thereafter.

The same holds true in the absence of randomized assignment when job-seekers themselves, or the counselor at the employment agency, decide on program participation. Program start and continuation are, in general, closely linked to the labor market opportunities of job-seekers. For job-seekers as well as program administrators, re-employment is the primary goal. If the job search is unsuccessful, training becomes an attractive option to improve search effectiveness and occupational skills. In the US, where participants do not receive financial support during program participation, eligible job-seekers tend to enroll in programs when their opportunities to find employment on their own have become very low [5]. In countries with comprehensive support systems for the unemployed, such as Germany and the Nordic countries, job-seekers repeatedly meet the counselor at the employment agency. If they fail to find a job, they are eventually assigned to an active labor market program [6], [7]. Similarly, program participants may drop out for reasons related to their labor market opportunities.

As a consequence, care has to be taken in both the design and interpretation of evaluation studies. First, social experiments of training programs often do not determine the effect of “training” against the alternative of “no training,” because not all subjects in the treatment group take part in the intended program, and some subjects in the control group obtain similar services elsewhere. Thus, the effect determined via a social experiment often corresponds to the effect of “having the option to participate in the intended program,” against the alternative of “potentially participating in a substitute program.” Similarly, some non-experimental methods focus on the effect of “starting training at a given point in time,” against the alternative of “not starting training at that point in time,” which involves the option of future participation [7]. Research for Germany documents that the effect of “training now versus not now, but potentially in the future,” underestimates the effect of “training versus no training” by about 30% [8]. This means that training impacts may appear low just because the alternative against which training is evaluated involves a similarly effective treatment at the same point in time, or in the future.

Second, while the early evaluation literature casts doubt on the credibility of non-experimental evaluations, later research has demonstrated that a general skepticism of non-experimental evaluations is not warranted. However, care is again needed in the design of non-experimental evaluations in order to avoid comparisons between treatment and control group units who differ in systematic ways other than the participation status.

Considering the arguments outlined above, among the job-seekers who end up participating in training programs, those whose search efforts were unsuccessful tend to be over-represented, while among non-participants the successful job-seekers tend to be over-represented. Thus, a naïve comparison of labor market outcomes of participants and non-participants is likely to be biased towards finding a negative effect of training. Several studies for the US document that failure to align treatment and comparison group units in their recent earnings and employment histories contributes substantially to the bias of naïve, non-experimental methods [9], [10].
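The direction of this bias can be illustrated with a small constructed example. The counts below are entirely hypothetical: within each group of job-seekers, training raises the employment rate by ten percentage points, but participants are drawn mostly from the group with a weak recent labor market history. The sketch compares the naïve difference in employment rates with a comparison that first aligns participants and non-participants on their history.

```python
from fractions import Fraction

# Hypothetical counts: (number of people, number employed one year later).
data = {
    "weak recent history": {"trained": (80, 32), "untrained": (20, 6)},
    "strong recent history": {"trained": (20, 16), "untrained": (80, 56)},
}


def rate(cells):
    """Employment rate pooled over a list of (people, employed) cells."""
    people = sum(n for n, _ in cells)
    employed = sum(e for _, e in cells)
    return Fraction(employed, people)


def naive_effect(data):
    """Pooled participants vs. pooled non-participants, ignoring history."""
    trained = [s["trained"] for s in data.values()]
    untrained = [s["untrained"] for s in data.values()]
    return rate(trained) - rate(untrained)


def aligned_effect(data):
    """Compare within each history group, then weight by participants."""
    n_trained = sum(s["trained"][0] for s in data.values())
    total = Fraction(0)
    for s in data.values():
        gap = rate([s["trained"]]) - rate([s["untrained"]])
        total += gap * s["trained"][0]
    return total / n_trained


naive = naive_effect(data)      # negative despite a positive true effect
aligned = aligned_effect(data)  # recovers the ten-point gain
```

In this constructed case the naïve comparison yields a negative effect, while the aligned comparison recovers the positive effect that was built into the data.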

Evidence for Germany shows that evaluation results are highly sensitive to the way in which non-experimental methods take into account the unemployment experience of treatment and comparison group units [11]. Failure to align treatment and comparison group units in their unemployment experience prior to the program start results in impact estimates that are biased downward by a substantial amount [11]. This bias is exacerbated by the fact that training participants are similar to successful job-seekers in terms of their demographic characteristics. Dynamic evaluation methods that model transitions between different labor market states have proven useful in aligning treated and comparison units in their labor market histories.

Third, some important questions of interest to researchers and policymakers cannot be addressed with standard research designs in which program participation is modeled as a “one-time decision,” and impacts are measured by cross-sectional comparisons of labor market outcomes. Static designs cannot be used to study dynamic issues that involve repeated decisions on training participation and take-up of employment over time. Examples of dynamic questions include:

What is the optimal sequence of training programs?

What is the optimal duration of training programs?

What is the optimal timing of training during the period of unemployment?

What is the effect of training on the remaining unemployment duration?

What is the effect of training on the stability of subsequent periods of employment?

Tackling dynamic evaluation questions such as these requires methods that model the time spent in different labor market states and the transitions between them [1], [4], [6], [8], [12].

Summary assessment of training programs

The above methodological issues have contributed to a rather pessimistic view, among researchers as well as policymakers, of the effectiveness and efficiency of training programs. For instance, the youth training programs under the US Job Training Partnership Act (JTPA) were cut by over 80% following a negative assessment in the National JTPA Study, a large-scale social experiment. A re-analysis shows that the experimental impact estimates were so low because of the large fractions of treatment-group dropout and control-group substitution [13]. After correcting for dropout and substitution bias, the picture changed, suggesting that the net returns to the participants are actually large.
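One common way to see why dropout and substitution dilute experimental estimates is to rescale the difference between the assigned groups by the difference in actual training receipt. The sketch below shows this standard rescaling with hypothetical numbers; it is not necessarily the procedure used in the re-analysis cited above, and it assumes that assignment affects outcomes only through training receipt and that substitute training is as effective as the intended program.

```python
def effect_per_participant(itt, takeup_treatment, takeup_control):
    """Rescale an intention-to-treat estimate by the take-up gap.

    itt: mean outcome of the assigned treatment group minus that of the
        control group.
    takeup_treatment: share of the treatment group that actually trained.
    takeup_control: share of the control group that obtained similar
        training elsewhere.
    """
    gap = takeup_treatment - takeup_control
    if gap <= 0:
        raise ValueError("take-up in the treatment group must exceed control")
    return itt / gap


# Hypothetical figures: an earnings difference of 300 between the groups,
# with 75% take-up in the treatment group and 25% substitution in control.
adjusted = effect_per_participant(300.0, 0.75, 0.25)
```

With only half of the intended contrast in training receipt realized, the implied effect per additional participant is twice the raw experimental difference.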

A cost–benefit assessment for job-search training and occupational skills training in Germany, based on a fully dynamic analysis, also reaches a more positive conclusion [1]. Occupational skills training costs almost €5,850 per participant in Germany, which is a common figure by international comparison. In contrast, job-search training costs €560 per participant. In addition to this, the employment agency pays for unemployment compensation and social security contributions, totaling €1,050 per job-seeker, per month. Job-search training shortens unemployment, which corresponds to a reduction in the associated transfer payments of approximately €1,850 [1]. In addition, job-search training has no adverse effects on employment stability and earnings. This suggests that job-search training is cost-effective from a fiscal point of view.

The cost–benefit assessment for occupational skills training is less clear-cut, as it initially prolongs unemployment but then increases employment stability and earnings. With the additional benefit payments during the extra time in unemployment, a long-term training course results in approximately €9,000 of additional costs to the German taxpayer, compared with the situation of non-participation [1]. The substantial positive effects on employment stability and earnings suggest that occupational skills training effectively increases the productivity of job-seekers to a similar extent as initial education. Therefore, it seems reasonable that, in the long term, occupational skill training “pays off” from a fiscal point of view, as the improved employment and earnings outcomes translate into lower transfer payments out of the unemployment insurance system and a higher labor-tax revenue. From the perspective of the job-seeker, higher earnings imply that their anticipated future potential income, and thus their lifetime welfare, increases more with long-term than with short-term training, provided that unemployment compensation during the initial lock-in phase is sufficiently high.
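The fiscal arithmetic behind these figures can be retraced in a few lines. The sketch below uses only the amounts quoted in the text and treats the prolongation of unemployment as exactly three months, which is an assumption (the text says "about three months"). The break-even calculation at the end is a deliberate simplification that ignores discounting and additional tax revenue.

```python
import math

COURSE_COST_SKILLS = 5850  # euros per participant, occupational skills training
COURSE_COST_SEARCH = 560   # euros per participant, job-search training
MONTHLY_TRANSFERS = 1050   # euros per job-seeker per month


def fiscal_cost_skills(extra_months=3):
    """Course cost plus transfers paid during the extra time in unemployment."""
    return COURSE_COST_SKILLS + extra_months * MONTHLY_TRANSFERS


def net_saving_search(transfer_saving=1850):
    """Saved transfers (figure quoted in the text) minus the course cost."""
    return transfer_saving - COURSE_COST_SEARCH


def breakeven_months(total_cost):
    """Months of avoided transfers needed to recoup a given fiscal cost."""
    return math.ceil(total_cost / MONTHLY_TRANSFERS)


skills_cost = fiscal_cost_skills()            # 9000 euros
search_net = net_saving_search()              # 1290 euros
months_needed = breakeven_months(skills_cost)  # 9 months
```

Under these simplifying assumptions, a long-term course would need to avoid roughly nine months of transfer payments later on to recoup its cost through transfers alone.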

Limitations and gaps

Many important evaluation questions on the dynamics of training cannot be addressed with standard static research designs. Recent research has contributed to the advancement of dynamic evaluation approaches. The empirical evidence on the dynamic effects of training is, however, still limited. Existing studies often lack a comprehensive approach regarding the range of programs and outcomes considered. Evidence on the effect of the timing and sequencing of training in connection with different course contents is also scarce.

Future research could explore designs with repeated random assignment, as well as combined experimental and non-experimental approaches. Moreover, an accurate cost–benefit assessment of different types of training would require follow-up periods as long as ten or 20 years. Such evidence is missing to date.

Summary and policy advice

Training programs are an important, but controversial, instrument of active labor market policy. Early evaluation studies cast doubt on the effectiveness of long-term, skills-oriented programs in particular. This pessimistic view is rooted in a misperception of the dynamics of training programs. On the one hand, training participation is the outcome of a dynamic decision process that responds to the evolution of job opportunities. On the other hand, the impacts of training programs change dynamically over time.

For job-seekers, as well as for training-program administrators, stable and long-term re-employment is the primary goal. Training becomes an attractive option when the job search is unsuccessful. Thus, unsuccessful job-seekers tend to be over-represented among participants in training. In non-experimental evaluations, failure to align treated and comparison units in their recent labor market histories can therefore lead to an underestimation of program impacts. In social experiments, standard delays between random assignment, program start, and program end mean that experimental subjects may update and revise their decisions on program participation and take-up of employment in-between. Treatment-group dropout and control-group substitution are common phenomena. Hence, training impacts may appear low because the experimental evaluation does not compare training with no training, but considers two intermediate options, i.e. potential participation in the intended program and potential participation in a substitute program.

Concerning the time profile of training impacts, longer-term training initially leads to negative effects, as participants miss out on job opportunities while they improve their occupational skills. However, studies that consider longer follow-up periods of more than two years, and studies that estimate the effects on employment stability, suggest that skills-intensive training generates persistent long-term gains. In contrast, the beneficial effects of short, job-search-oriented training materialize instantaneously but do not persist for as long.

It is important, therefore, that policymakers are aware that while job-search-oriented training can successfully activate job-seekers in the short term, investment in skills-oriented training is more effective for addressing individual welfare issues, tackling structural mismatches between worker skills and employer demand, and improving labor market outcomes in the long term.

Acknowledgments

The author thanks an anonymous referee and the IZA World of Labor editors for many helpful suggestions on earlier drafts. The author also thanks Bernd Fitzenberger, Volker Grossmann, and Marie Paul. Previous work of the author has been used intensively in major parts of this article [1].

Competing interests

The IZA World of Labor project is committed to the IZA Guiding Principles of Research Integrity. The author declares to have observed these principles.

© Aderonke Osikominu